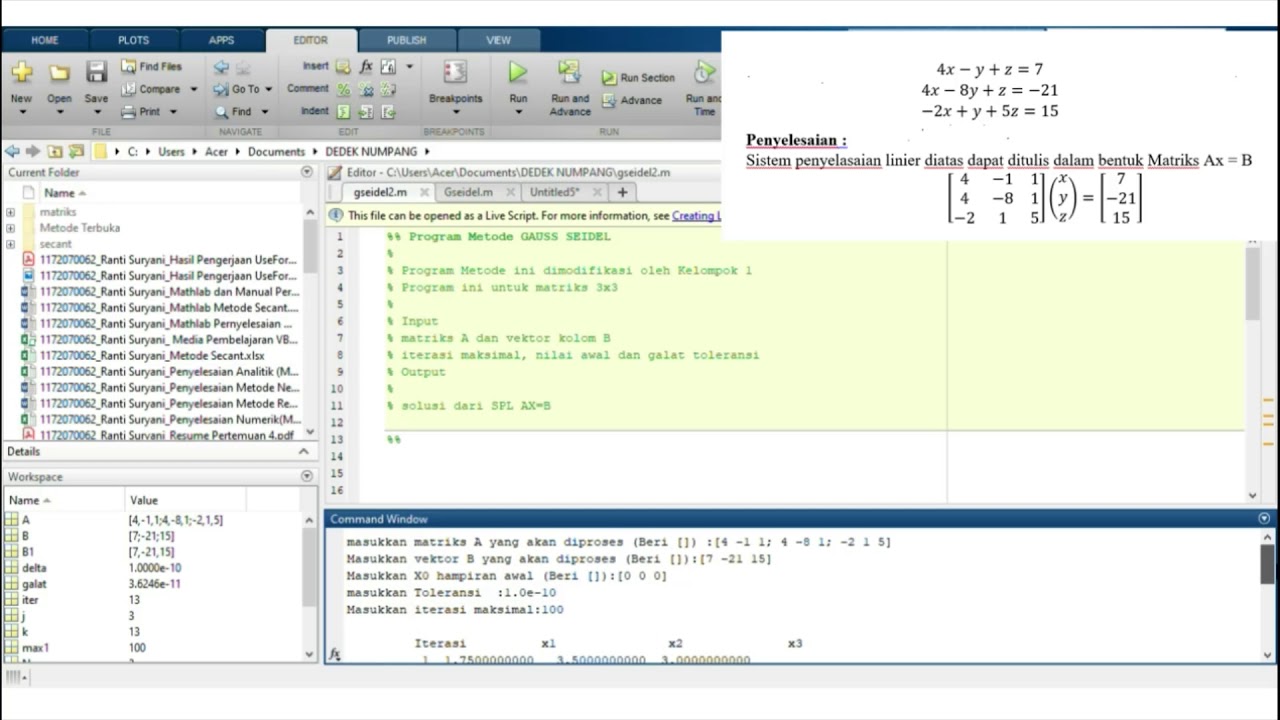

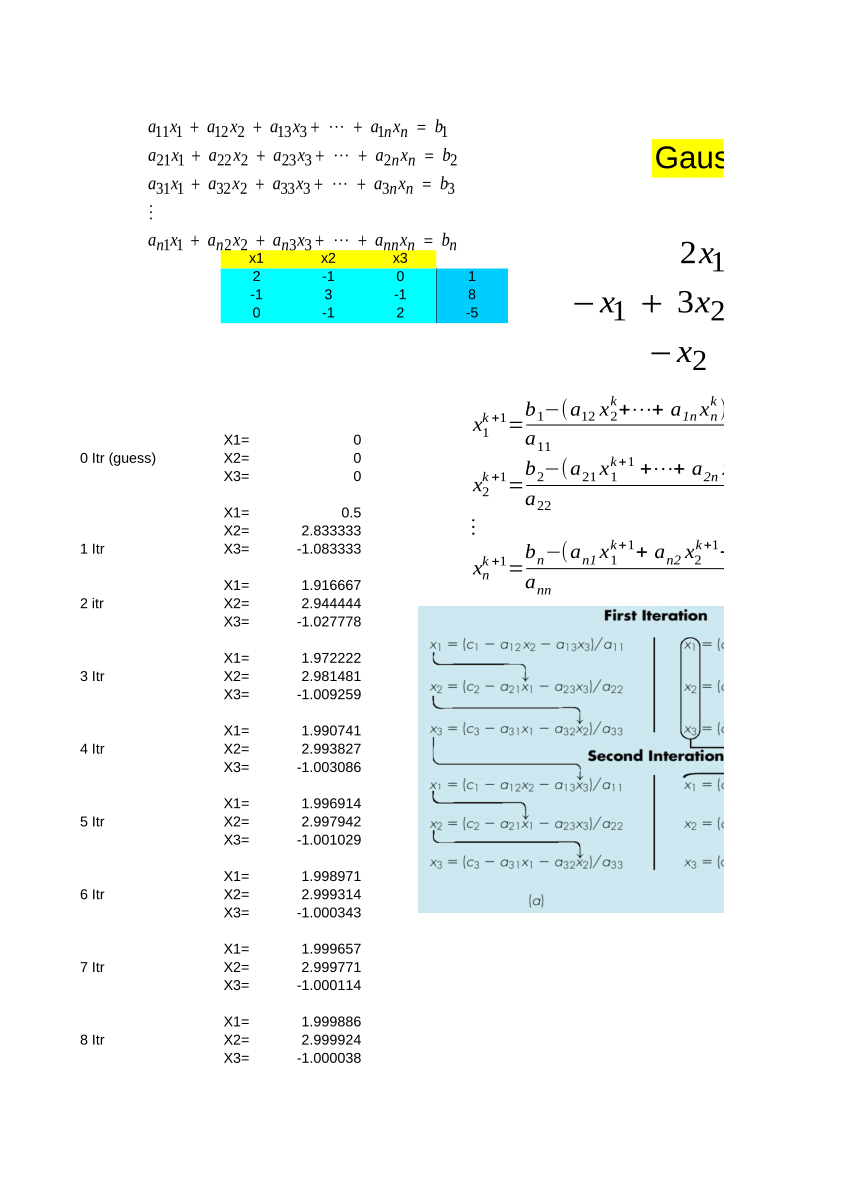

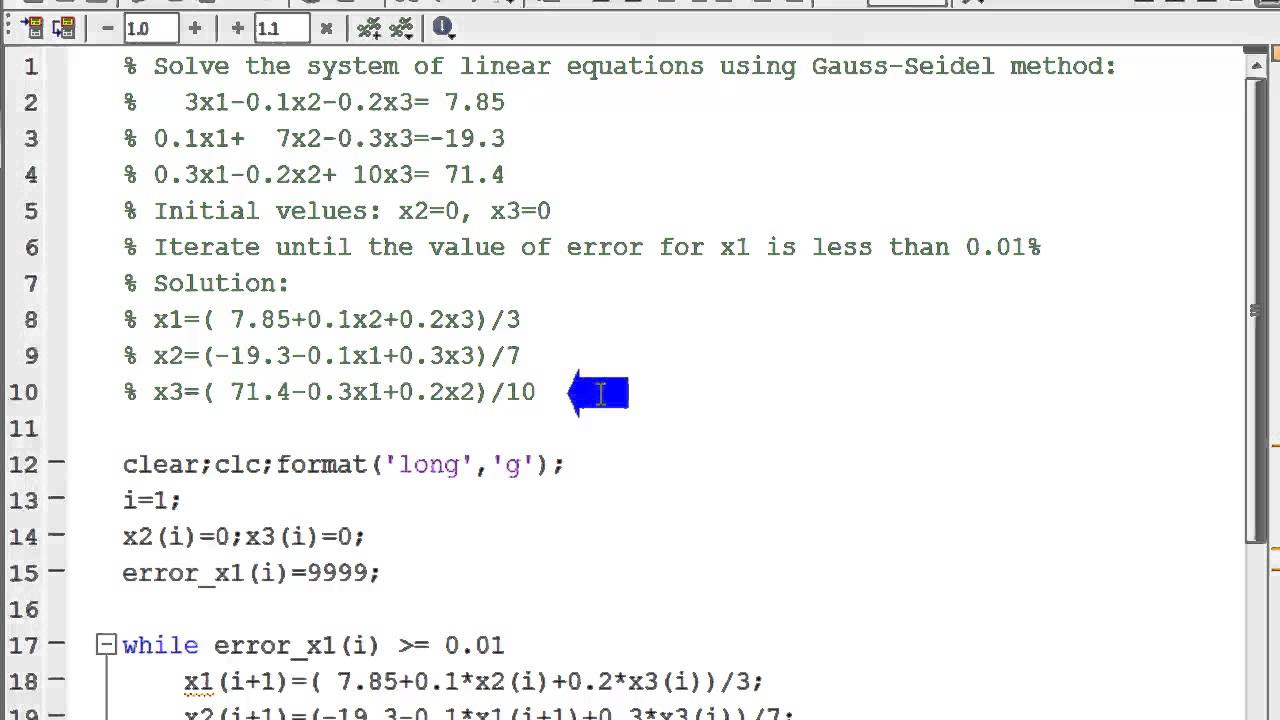

I'm having trouble understanding where my problem is, and any help would be appreciated. The sum of the squares of the residuals increases at each iteration rather than tending towards 0, and my resulting `B` vector keeps growing.

You go wrong in the code of the beta update. It should be `B = B - np.dot(np.dot(inv(np.dot(Jft, Jf)), Jft), r)`. Use `inv` (for example from `numpy.linalg`) to calculate the inverse matrix instead of `**-1` on the matrix: on a NumPy array, `**-1` takes the reciprocal of each element rather than inverting the matrix.

The solver $R$ can be chosen as the weighted Jacobi iteration $R = \omega D^{-1}$ ($\omega = 0.5$ is recommended as the default choice) or the symmetric Gauss-Seidel iteration with $R = (D + L)^{-1}$.
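The two solver choices named above can be sketched in NumPy as follows. This is only an illustration under stated assumptions: the function names, the test matrix `A`, and the right-hand side `b` are hypothetical, `A` is assumed symmetric with a nonzero diagonal, and the backward sweep with $(D + U)^{-1}$ is the usual way to symmetrize Gauss-Seidel rather than something spelled out in the text.

```python
import numpy as np


def weighted_jacobi(A, b, x, omega=0.5, sweeps=1):
    """Apply x <- x + omega * D^{-1} (b - A x), with D the diagonal of A."""
    d = np.diag(A)
    for _ in range(sweeps):
        x = x + omega * (b - A @ x) / d
    return x


def symmetric_gauss_seidel(A, b, x, sweeps=1):
    """Forward sweep with (D + L)^{-1}, then backward sweep with (D + U)^{-1}."""
    lower = np.tril(A)  # D + L
    upper = np.triu(A)  # D + U
    for _ in range(sweeps):
        x = x + np.linalg.solve(lower, b - A @ x)
        x = x + np.linalg.solve(upper, b - A @ x)
    return x


# Hypothetical small symmetric positive definite test system.
A = np.array([[4.0, 1.0, 0.0],
              [1.0, 4.0, 1.0],
              [0.0, 1.0, 4.0]])
b = np.array([1.0, 2.0, 3.0])
x0 = np.zeros(3)

x_jacobi = weighted_jacobi(A, b, x0, omega=0.5, sweeps=200)
x_sgs = symmetric_gauss_seidel(A, b, x0, sweeps=50)
x_direct = np.linalg.solve(A, b)
print(x_jacobi, x_sgs, x_direct)
```

Run to convergence like this, both iterations should agree with the direct solve. Inside a multigrid cycle only a few sweeps would normally be applied per level.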

We only discuss the realization of step 2.

The matrix form of steps 1 and 3 is trivial.

Iterative techniques are rarely used for solving linear systems of small dimension because the computation time required for convergence usually exceeds that required for direct methods such as Gaussian elimination.

Improving Numpy speed for Gauss-Seidel (Jacobi) Solver. This question is a follow-up to a recent question posted regarding MATLAB being twice as fast as Numpy.

I'm relatively new to Python and am trying to implement the Gauss-Newton method, specifically the example on the Wikipedia page for it (Gauss–Newton algorithm, 3 Example). The following is what I have done so far. The script starts with `import scipy` and `from matplotlib import pyplot as plt, cm, colors`, defines a `Rate` array and the original guess for `B` as an `np.matrix` (values omitted here), and allocates the Jacobian matrix of `r` as `Jf = np.zeros((rows,cols))`. Inside the loop, the squared residuals are accumulated with `sumOfResid = sumOfResid + (r * r)` and `B` is updated with `B = B + np.dot((np.dot(Jft,Jf)**-1),(np.dot(Jft,r)))`.
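For comparison, here is a minimal sketch of a Gauss-Newton loop with the update written using `inv` and a minus sign, as the answer quoted in this post suggests. Several things are assumptions made for illustration: the rational model `B[0] * x / (B[1] + x)`, the sample points `x`, the noise-free data generated from a hypothetical `beta_true`, and the starting guess. None of these are the original poster's values.

```python
import numpy as np
from numpy.linalg import inv

# Hypothetical noise-free data generated from known parameters, so the
# fitted result can be checked against beta_true.
x = np.array([0.038, 0.194, 0.425, 0.626, 1.253, 2.500, 3.740])
beta_true = np.array([0.36, 0.56])
y = beta_true[0] * x / (beta_true[1] + x)


def residuals(B):
    """r_i = y_i - B[0] * x_i / (B[1] + x_i)."""
    return y - B[0] * x / (B[1] + x)


def jacobian(B):
    """Jacobian of the residuals with respect to B, one row per data point."""
    Jf = np.zeros((x.size, 2))
    Jf[:, 0] = -x / (B[1] + x)
    Jf[:, 1] = B[0] * x / (B[1] + x) ** 2
    return Jf


B = np.array([0.9, 0.2])  # assumed starting guess
history = []              # sum of squared residuals before each update
for _ in range(20):
    r = residuals(B)
    history.append(float(np.dot(r, r)))
    Jf = jacobian(B)
    Jft = Jf.T
    # True matrix inverse via inv; the sign is minus because Jf is the
    # Jacobian of the residuals r.
    B = B - np.dot(np.dot(inv(np.dot(Jft, Jf)), Jft), r)

print(B, history[0], history[-1])
```

With this update the sum of squared residuals should shrink towards 0 instead of growing. In practice, `np.linalg.solve(np.dot(Jft, Jf), np.dot(Jft, r))` is usually preferred over forming the inverse explicitly.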
